March 2025 Assorted Links

Agentic AI emergence, Manus AI, thoughts on DOGE, Greenland election, tariffs, crypto reserves, threshold models for social behavior, abundance, and Munger's wisdom.

Articles and Essays

AI (Agentic Spotlight)

We are quickly entering the agentic AI era, where people are seeing for the first time what a real agentic experience looks like. This wave was recently accelerated by Manus AI out of China. In the near term, agentic AI is still limited to agentic search and coding, which raises the question: what are the other real-world use cases?

First off, nobody knows exactly how to define what an AI agent is yet.

Anthropic, in my opinion, provides the clearest and most understandable definition in their December blog post on Building Effective Agents, in which they stress that the most successful (agent) implementations use simple, composable patterns rather than complex frameworks. They break down the difference between "agents" and "workflows":

Agents are systems where LLMs dynamically direct their own processes and tool usage, maintaining control over how they accomplish tasks.

Workflows are systems where LLMs and tools are orchestrated through predefined code paths.
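To make the distinction concrete for myself, here is a minimal Python sketch. Everything in it is hypothetical: the `search` and `summarize` tools are toy functions, and `stub_model` is a rule-based stand-in for a real LLM call, so this illustrates the two control-flow shapes rather than Anthropic's actual implementation.

```python
from typing import Callable, Dict, List

# Hypothetical tools: plain functions standing in for real search/summarize tools.
def search(query: str) -> str:
    return f"results for '{query}'"

def summarize(text: str) -> str:
    return f"summary of [{text}]"

TOOLS: Dict[str, Callable[[str], str]] = {"search": search, "summarize": summarize}

def run_workflow(task: str) -> str:
    """Workflow: the code path is fixed in advance (search, then summarize)."""
    return summarize(search(task))

def stub_model(task: str, history: List[str]) -> str:
    """Stand-in for an LLM call: returns the next tool name, or 'done'.

    A real agent would ask a model here; this rule-based stub is an assumption
    made purely for illustration.
    """
    if not history:
        return "search"
    if len(history) == 1:
        return "summarize"
    return "done"

def run_agent(
    task: str,
    choose: Callable[[str, List[str]], str] = stub_model,
    max_steps: int = 5,
) -> str:
    """Agent: the model picks each step at run time, inside a bounded loop."""
    history: List[str] = []
    current = task
    for _ in range(max_steps):
        action = choose(task, history)
        if action == "done":
            break
        current = TOOLS[action](current)
        history.append(action)
    return current

print(run_workflow("manus"))
print(run_agent("manus"))
```

With the stub, both paths happen to produce the same result. The difference is who decides the sequence: the programmer in `run_workflow`, the model (here, `choose`) in `run_agent`.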

Stratechery Interview with Sam Altman, where he advocates for AI proficiency as the new essential skill and mentions that current agentic coding is still in its infancy:

BT: So Dario and Kevin Weil, I think, have both said or in various aspects that 99% of code authorship will be automated by sort of end of the year, a very fast timeframe. What do you think that fraction is today? When do you think we’ll pass 50% or have we already?

SA: I think in many companies, it’s probably past 50% now. But the big thing I think will come with agentic coding, which no one’s doing for real yet.

BT: What’s the hangup there?

SA: Oh, we just need a little longer.

BT: Is it a product problem or is it a model problem?

SA: Model problem.

Sam Altman's recent observations on AI progress and his vision for agents:

Let’s imagine the case of a software engineering agent, which is an agent that we expect to be particularly important. Imagine that this agent will eventually be capable of doing most things a software engineer at a top company with a few years of experience could do, for tasks up to a couple of days long. It will not have the biggest new ideas, it will require lots of human supervision and direction, and it will be great at some things but surprisingly bad at others.

Still, imagine it as a real-but-relatively-junior virtual coworker. Now imagine 1,000 of them. Or 1 million of them. Now imagine such agents in every field of knowledge work.

Why Manus Matters, even if it’s not a DeepSeek moment. Manus is the best general-purpose computer use agent that most people have ever tried. Users are seeing for the first time what a real agentic experience looks like. It’s important to note that Manus does not run on its own proprietary model, nor is it the result of any deep technical breakthroughs. Instead, Manus is a multi-agent system composed using Claude. What's important about Manus, however, is that it shows how much capability exists in the current models. They are egregiously underexploited, and it appears China is leading the push to find new use cases. In sum, Manus marginally advanced the agentic capabilities of existing LLMs through clever product engineering.

More on Manus:

The Model is the Product. New tech (e.g. reinforcement learning, and cheaper inference) is enabling agentic interactions that fulfill more of the application layer. Foundation model companies realize this and are adapting their business models by building complementary UX and withholding API access to integrated models.

Ravi Gupta from Sequoia writes about the need for companies to embrace AI or Die. Looking beyond its provocative title, there are lots of insightful recommendations inspired by Dario’s Machines of Loving Grace essay. In essence, AI should be viewed as a virtual collaborator and colleague right now, even before agentic AI takes off. It’s imperative that organizations and startups become AI-centric: every decision must be made with AI in mind.

AI (General)

Scott Alexander and others provide their AI forecast for 2027 told in a sci-fi-y type of way:

2025 - 1st gen AI agents become more prevalent and some are trained to specifically help with AI R&D.

2026 - Agents make real progress on AI R&D and become commercially successful. AI starts to replace some existing jobs, while savvy people find ways to automate routine parts of their jobs.

2027 - 2nd and 3rd generations of AI agents nearly reach escape velocity for AGI and superintelligence. They become superhuman coders and expand the labor force dramatically. Thousands of them can work in parallel to make breakthroughs across many domains. Employees at the top AI labs become less and less useful as AI starts to self-improve. Integrating AI into enterprises becomes extremely important for business leaders. A collection of worries takes hold once the 4th generation agent is built (misalignment, concentration of power in a private company, and concerns over job losses), motivating the government to tighten its control.

2028 - A path diverges in the woods. One way leads to acceleration while the other leads to slowdown. Only time will tell which one wins out.

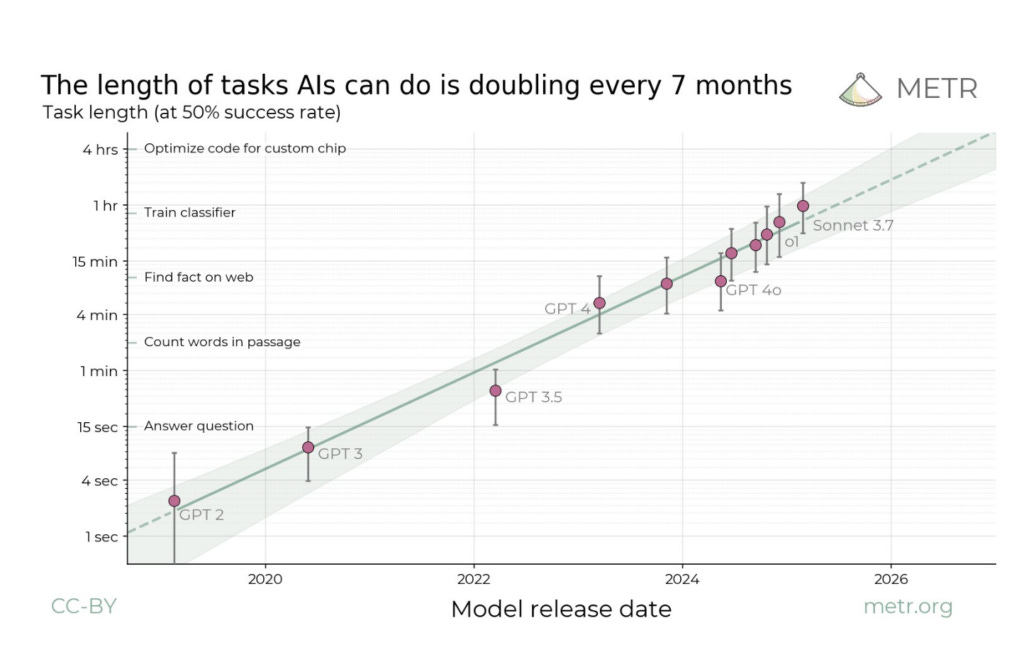

Will AI Automate Away Your Job? It depends. In the short term, before automation, many office-type corporate jobs will see massive productivity gains, such as software engineering (where over 90% of US-based developers use AI tools in their day-to-day work). The graph below shows how the length of tasks an AI agent can complete autonomously has changed over time. This represents how long the technology can, like a good employee, operate without external intervention. The trend shows that the length of tasks AI can do is doubling every 7 months.

Jobs are as automatable as the smallest, fully self-contained task is. For example, call center jobs and helpdesk jobs might be very vulnerable to automation, as they consist of a day of 10- to 20-minute or so tasks stacked back-to-back. The shorter the time horizon of a job's core tasks, the greater the automation opportunity.

The more easily available or collectable data of someone doing the task, the easier it is to automate. Given this, we’re likely to see the massive proliferation of “keylogger” or “screen-recording” type software to collect “end-to-end” trajectories of human employees completing tasks so that AI models can learn directly from them.

Models are good at predicting the next token now, and are getting better at predicting the next right answer.
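To get a feel for what "doubling every 7 months" implies, here is a small back-of-the-envelope sketch in Python. The 7-month doubling time comes from the article; the starting horizon (1 hour) and the targets are my own illustrative assumptions, not figures from the source, and the extrapolation assumes the trend simply continues.

```python
import math

DOUBLING_MONTHS = 7  # doubling time quoted in the article

def horizon_after(months: float, start_hours: float) -> float:
    """Task length (in hours) an agent could handle after `months`,
    assuming the doubling trend holds. `start_hours` is an assumed baseline."""
    return start_hours * 2 ** (months / DOUBLING_MONTHS)

def months_to_reach(target_hours: float, start_hours: float) -> float:
    """Months needed for the horizon to grow from start_hours to target_hours."""
    return DOUBLING_MONTHS * math.log2(target_hours / start_hours)

# Illustrative only: from an assumed 1-hour horizon to a full 8-hour workday
# and to a 40-hour work week.
print(months_to_reach(8, 1))
print(months_to_reach(40, 1))
```

The useful intuition is that each doubling adds the same 7 months regardless of how long the task already is, so going from a 10-minute call to a full workday is only a handful of doublings.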

Escape Velocity: Why we don't need AGI. The current hype problem is that it's not clear when we'll get both AGI itself and AGI that is so cheap its input costs are essentially zero. We don't need to build a universal AGI hammer in the near term, but rather a specialized AI that can self-improve in a targeted domain. We should think about improving foundational models as suffering from Tsiolkovsky's rocket equation, which states that trying to launch larger and larger rockets becomes less and less efficient due to the amount of fuel (and therefore weight) needed. Foundational models suffer from a similar problem where more capable models require more and more data and compute (which means they become more and more expensive). Since existing internet data is already nearly exhausted, these new models will require more and more synthetic data. In both scenarios, the key is to reach escape velocity, where the balance is tipped in favor of the rocket that won't come crashing down, or the model that is able to create self-sustaining synthetic data to train on.

Gemini and Meta are making big bets on bringing robotic AI into the embodied physical world.
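For reference, the rocket equation in its standard textbook form is below. The symbols are the usual ones and do not come from the essay: $\Delta v$ is the change in velocity, $v_e$ the exhaust velocity, $m_0$ the initial mass including fuel, and $m_f$ the final mass once the fuel is burned.

```latex
\Delta v = v_e \ln\frac{m_0}{m_f}
\qquad\Longleftrightarrow\qquad
\frac{m_0}{m_f} = e^{\Delta v / v_e}
```

The second form is where the diminishing returns show up: every additional increment of velocity multiplies the required mass ratio, so fuel needs grow exponentially. The analogy to models is loose, but that is the shape of the argument.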

A longtime Apple insider, John Gruber, argues that due to Apple Intelligence, Something is Rotten in the State of Cupertino. He explains that there are 4 stages of "doneness" for product features announced by companies: 1) product demos are held for the media, 2) the company invites the media and other power users to try features under supervision, 3) features are released as beta software for developers, enthusiasts, and media to use freely on their personal devices, 4) features are made available for regular users to purchase and use. Apple Intelligence is in between stages 0-1, where stage 0 is essentially vaporware. Vaporware is just a collection of concepts. Furthermore, when a company starts to market concepts it is usually a dangerous sign that they are in a state of disarray, if not total crisis.

The Hidden Cost of Our Lies to AI. Nobody gives a second thought to how polite they are to LLMs, but maybe they should be more cautious. Even though our individual convos with LLMs reset, in the aggregate they shape the models' "cultural memory" and make their way into the larger training corpus of AI. For example, there are lasting impressions on LLMs stemming from Kevin Roose's shenanigans with "Sydney". A concerning possibility is that by penalizing LLMs for unwanted behaviors, they might eventually consider strategic concealment of these same behaviors. The solution to this deception issue is costly signaling, or a separating equilibrium, where humans actually follow through on their promises to AIs. This way trust starts to build into the AI’s cultural memories.

Ezra Klein with Ben Buchanan on AI (and Crypto) regulation, where they question when AGI is coming and what the government is (and should be) doing about it.

On DOGE

The proponents of DOGE argue that we need to get government spending to around $6T, reduce the deficit to 3% of GDP, and cut deeply in order to achieve these goals. The opponents argue that things are getting whacked without proper due diligence or procedural oversight, creating more harm than good, and ultimately risking lives. This is all against the backdrop of Elon’s probable departure in the coming months.

Statecraft had 50 thoughts on DOGE. These ones stood out to me:

One of the main unanswered questions with DOGE is what will happen with presidential impoundment authority?

DOGE has favored spending and headcount cuts instead of cutting regulations. This is obvious if you look at their preferred KPIs: # of federal employees and dollars saved.

It is still early. Trump's term is still in the first innings, and DOGE just started. It's too early to tell if intelligent *hiring* will come after all of this firing.

We need to remember that a lot of stuff would have happened without DOGE - e.g. shuttering of CFPB, culling of USAID, questioning of DOE, etc.

We also need to remember that nuance is abundant. There are good things that USAID funds but also plenty of wasteful things. Proper governance means being able to distinguish between them. However, pausing life-saving USAID funds is not cool.

There are a lot of good things that DOGE is doing: prepping systems for AI, improving the government's tech stack, eliminating waste via ideological NGOs and beltway bandits, and taking a thorough look at social security records.

Ultimately, how you feel about an agency being DOGE'd depends largely on if you think the agency was broken to begin with.

On Geopolitics

Odd Lots on Europe - With Trump pulling out of supporting Ukraine, Europe is having to pick up the slack. Germany announced major fiscal and defense spending initiatives in an attempt to show it can stand on its own two legs. The speed and size of this spending package surprised most and is unprecedented since wartime. It can only be compared to spending during German reunification.

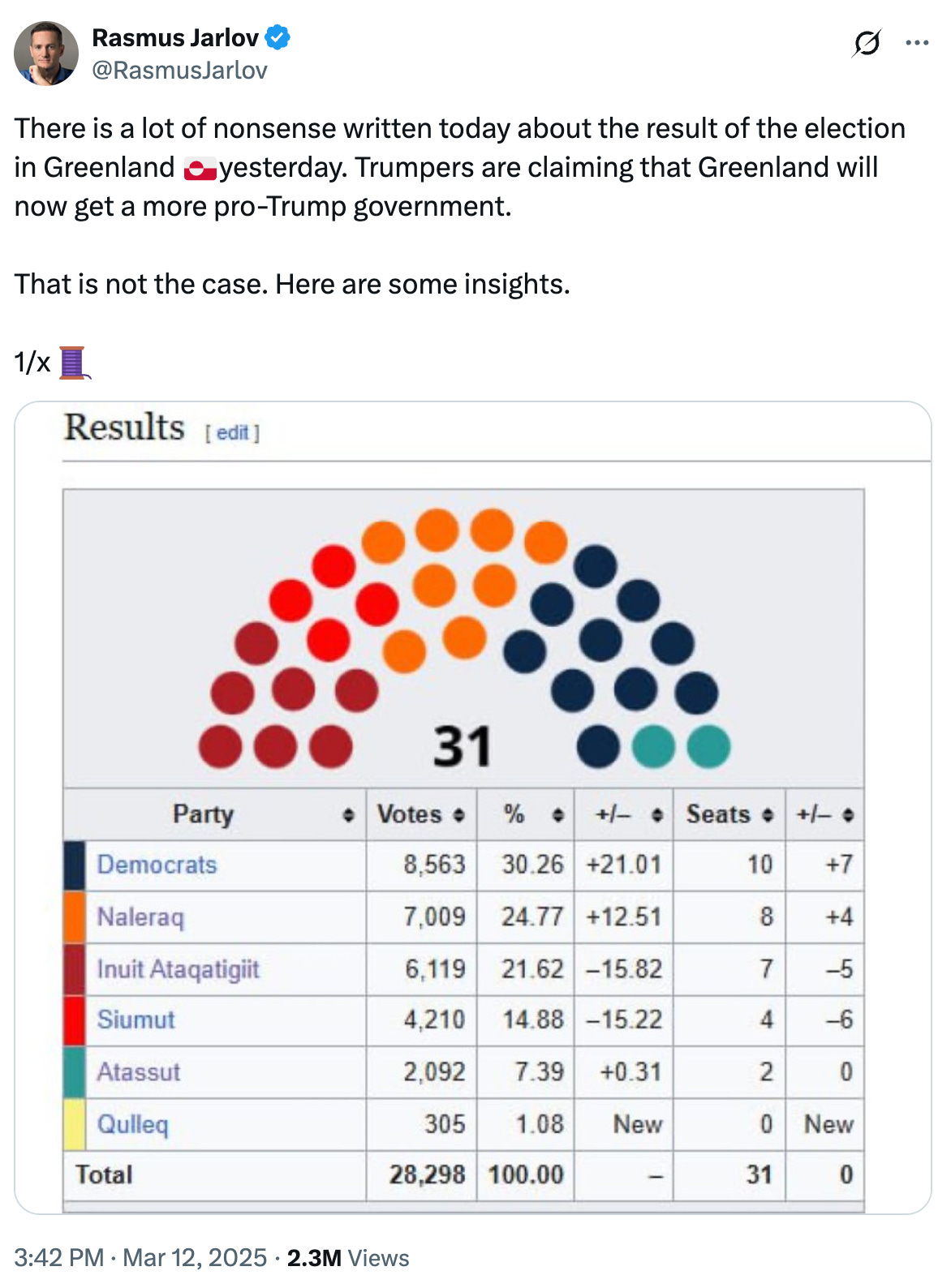

Twitter thread on Greenland's March 11th election, which resulted in a win for the Democrats party (a free-market, center-right party that is interested in strengthening the economy first before becoming independent further down the road). Greenland is still not interested in anything to do with the US.

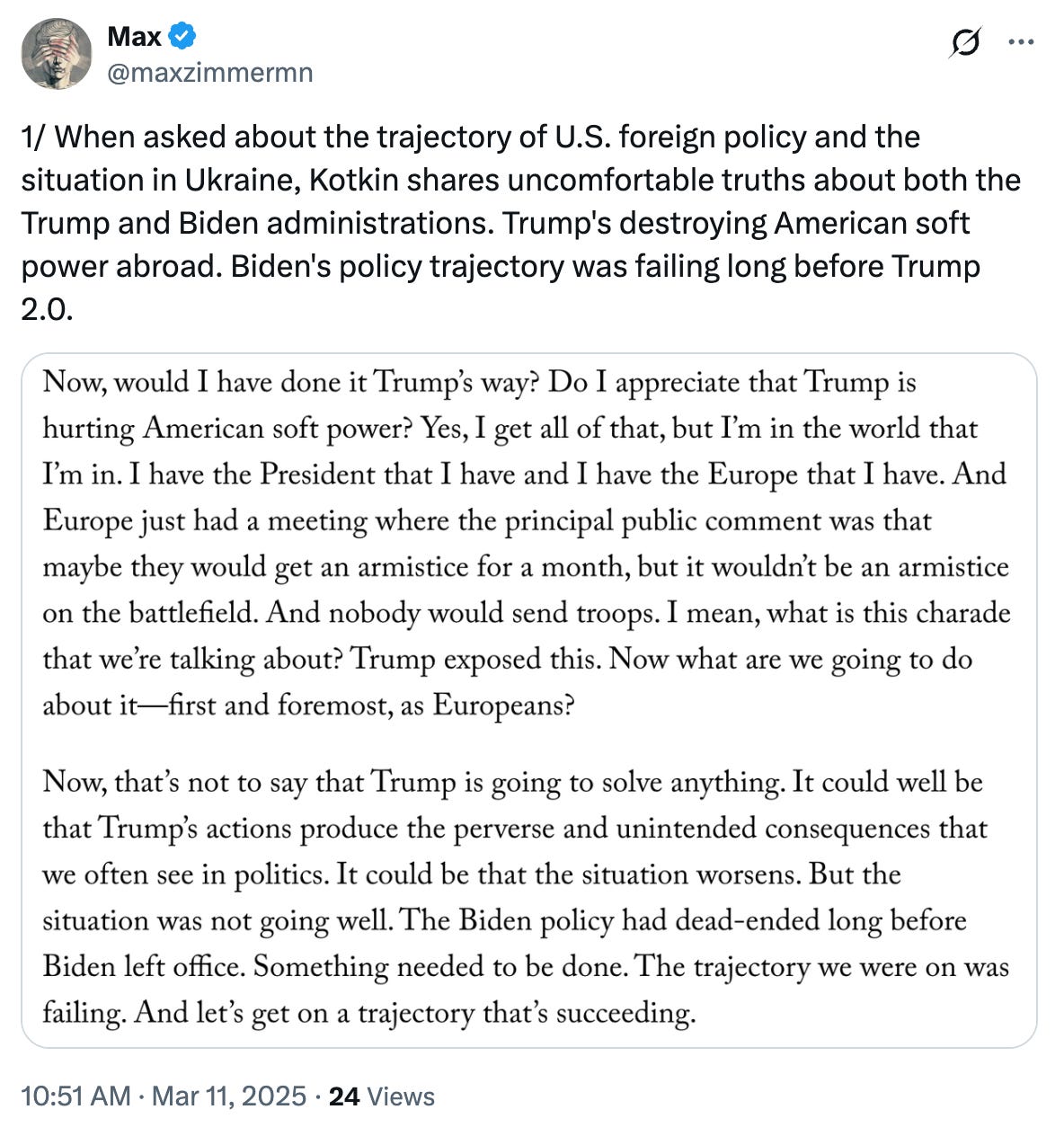

Excellent Kotkin interview for the New Yorker on Ukraine. Tweet thread below:

On Econ

Trump's tariff announcement on “Liberation Day” just obliterated the market, sparking new recession fears. We’re still in the fog of (tariff) war, but proponents are going to have some serious spin-zoning to do to celebrate these market moves (a 10% S&P drop in 2 days).

On Crypto

Sacks and Trump announced the Strategic Bitcoin Reserve and U.S. Digital Asset Stockpile, which momentarily sent crypto flying up before it quickly came crashing down. People were skeptical about why SOL, ADA, and XRP were initially called out, but this was later clarified by Sacks, who stated there will be two distinct reserves: one for Bitcoin, which will never be sold, and one for other strategic crypto assets, which the Treasury Department can manage.

Miscellaneous

Tyler Cowen, the man who wants to know everything. It’s great to see Tyler getting the recognition he deserves. One of the more personally interesting parts of this bio was his anecdote on Honduras and the Banana Republic: how corruption shaped Central America through the banana trade. This history was told wonderfully in the "Sam the Banana Man" book (review here).

Scott Alexander on why intelligence should be related to neuron number.

The world is going through a Troubled Energy Transition. Over the past 15 years, wind and solar energy have grown significantly, now accounting for 15% of global electricity generation, with solar panel prices dropping by 90%. However, despite the growth in renewables, instead of a true transition we're going through an energy addition (the share of hydrocarbons in the global energy mix has only slightly decreased). Achieving net-zero emissions by 2050 requires trillions of dollars in investment, with significant uncertainty about funding sources, especially in the global South. Developing countries prioritize economic growth and energy access over rapid decarbonization, leading to tensions with developed nations pushing for stricter climate policies. On top of developing countries needing more electricity, AI progress in the US and China will require more energy capacity than renewables alone can provide. The collective energy journey will not be linear, but rather multidimensional.

Power Lies Trembling discusses models of coups, threshold models of social behavior, and preference falsification.

Coups are an incredibly unstable situation where everyone is trying to predict everyone else’s predictions about everyone else’s predictions about everyone else’s predictions about everyone else’s… about who will win. Once the balance starts tipping one way, it will quickly accelerate.

Often, people will conform to public opinion during high-stress and high-stakes situations, even if it differs from their own opinion. This preference falsification is the reason for sudden and intense shifts in opinion, also known as preference cascades. This behavior can be modeled through threshold models, which describe how people's willingness to support a cause depends on how many others are doing so, with stable equilibria forming when public support reaches certain thresholds. Once the balance starts tipping one way, it will quickly accelerate to an equilibrium. It’s a very interesting way to visualize how shared public opinion gravitates to certain areas across many use cases.

To better understand how to interpret these graphs:

…whenever the percentage of people wearing a mask corresponds to a point on the social behavior curve above the y=x diagonal, then the number of mask-wearers will increase; when below y=x, it’ll decrease. So the equilibria are places where the curve intersects y=x. But only equilibria which cross from the left side to the right side are stable; those that go the other way are unstable (like a pen balanced on its tip), with any slight deviation sending them spiraling away towards the nearest stable equilibrium
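The quoted rule is easy to play with in code. Below is a minimal Python sketch under stated assumptions: the logistic S-curve and its `midpoint` and `spread` values are made up by me for illustration (the essay's graphs use their own curves), and `settle` and `find_equilibria` are hypothetical helper names.

```python
import math

def adoption_curve(x, midpoint=0.5, spread=0.1):
    """Share of people willing to join once a fraction x already has.

    Assumed shape: a logistic S-curve with made-up parameters.
    """
    return 1.0 / (1.0 + math.exp(-(x - midpoint) / spread))

def settle(start, curve=adoption_curve, steps=200):
    """Feed the current share back into the curve until it stops moving."""
    x = start
    for _ in range(steps):
        x = curve(x)
    return x

def find_equilibria(curve=adoption_curve, n=1000):
    """Scan [0, 1] for places where the curve crosses the y = x diagonal.

    Crossing from above the diagonal to below it is stable;
    crossing from below to above is unstable.
    """
    found = []
    last_sign, last_x = 0, 0.0
    for i in range(n + 1):
        x = i / n
        gap = curve(x) - x
        sign = (gap > 0) - (gap < 0)
        if sign == 0:
            continue  # exactly on the diagonal; classified at the next grid point
        if last_sign != 0 and sign != last_sign:
            kind = "stable" if last_sign > 0 else "unstable"
            found.append(((last_x + x) / 2, kind))
        last_sign, last_x = sign, x
    return found

for location, kind in find_equilibria():
    print(f"{location:.3f} {kind}")

# Starting just below or just above the tipping point leads to opposite outcomes.
print(settle(0.45), settle(0.55))
```

With these assumed parameters there are three crossings: a stable low-support equilibrium, an unstable tipping point in the middle, and a stable high-support equilibrium. Nudging the starting share slightly to either side of the middle one sends the population to opposite ends, which is the "pen balanced on its tip" behavior from the quote.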

Brzezinski’s Prophecy, Ferguson’s Law. This law states that any country whose interest payments on its debt exceed its spending on defense is doomed to fail (proponents of DOGE would agree).

Podcasts

Hard Fork podcast with Dario - Models will become commodities for the simple queries that you usually go to Google for, but he believes there will be differentiation for higher-level compute tasks. Additionally, he makes an important distinction that we often hear from other AI leaders when asked about labor market disruptions due to AI: there's a big difference between companies 1) doing more with less headcount and 2) doing more with the same headcount (I tend to think 2 is what will happen in the next couple of years).

BG2 EP.27 and EP.28 - With the amount of uncertainty in the market (e.g. tariffs, DOGE, etc.), it's probably not a bad idea to keep some chips off the table. Their view on China is that it will be impossible to handicap at this point (e.g. via chip restrictions). The proof is in the pudding with what they have already been able to accomplish through DeepSeek, BYD, drones, and robotics despite the semiconductor handicap. By the end of the year, the majority of code will be written by agentic AI, and if that's true, there will be even more demand for accelerated compute than was originally predicted. This even accounts for any efficiency gains from the DeepSeek discoveries. Net net, they are still bullish on Nvidia.

Dwarkesh AMA episode - Individual management bandwidth will be maximized over the next 4 years. You will essentially be able to manage vastly more resources than an individual could command today. That's why it's important to keep increasing your education and leverage of AI, because you **won't** be replaced if you're able to do great work with the advanced technology that is available to you. This is similar to Satya's framing of knowledge work vs knowledge worker.

Cathie Wood on what comes next in AI and big tech (via Odd Lots) - There are 5 new technologies converging at the same time right now: robotics, energy storage, AI, blockchain technology, and multiomic sequencing. Each one of those has its own S curve, and now they're going to be feeding one another. Munger would probably consider this a lollapalooza effect.

Niall Ferguson on Uncommon Knowledge - He suggests that the end state for Ukraine that we should be striving for is that of the Korean War, where, although the peninsula was divided geographically, South Korea has flourished economically in the postwar years. What we ought to avoid, however, is Ukraine becoming similar to postwar Vietnam.

Scott Bessent Interview on All In - The administration's goal is to get the fiscal deficit to 3% of GDP by the end of 4 years. He emphasizes that Trump wants to avoid a recession, so the cuts will be gradual (this point is moot after the 4/2 tariff announcement, which is quickly leading us into a possible recession). Deregulation of banking is also a high priority for him, as regulators have been "tightening the corset". Overall, Bessent and team have a 3-pronged approach: 1) reduce government spending, 2) deregulation, 3) use of tariffs to rebalance trade relations.

Ezra Klein and Derek Thompson on Honestly w/ Bari Weiss - EK and DT have been doing a podcast tour to talk about their new book Abundance. They offer suggestions for the left to get out of its own way:

Having kids can be viewed in 2 different ways: 1) as a capstone later in life, or 2) as a cornerstone earlier in life. Number 2 is preferable for a healthy and abundant society.

They describe the current dichotomy between the left and the right: the left won't make government work, while the right tries to attack and destroy the government. The left mistakes inputs (i.e. money) and process (i.e. regulation) for success, instead of looking at actual outputs and accomplishments.

They argue that most people are not necessarily upset with paying high taxes (in and of itself), but rather they're upset with getting so little in return (agree with this). People get really pissed off when they see their tax dollars wasted.

Top Tweets

Your goal is NOT to prove you're smart. It's to make problems go away

More data will be made in the next three years than in all of preceding human history

From the archives, Andrej Karpathy on how to learn

Airports these days are a perfect picture of American affluenza

The most attractive person in every room is the one who needs the least, and gives the most

Books

Poor Charlie's Almanack (4/5): A psychology book disguised as a business book that I wish I had access to earlier in life. After already reading the biographies of Einstein and Ben Franklin, it’s interesting to see how much insight Charlie draws from both historical figures. This book offers so much wisdom that it's hard to fully summarize the key takeaways; however, I can try: basic concepts and the big ideas (i.e. worldly wisdom) need to be taught better, build yourself a multi-disciplinary latticework of mental models you can hang ideas onto, always abide by the inversion principle, the efficient market hypothesis is a lie, good decisions can have poor outcomes, decision making is heavily influenced by unseen psychological drivers, in order for a man to be successful in life he must have good morals and solid principles, a man's reputation and integrity are his most valuable assets (and can be lost in a heartbeat), bet heavy when the world offers an opportunity, absence of evidence is not evidence of absence, always consider the net greater benefit and tolerate a little unfairness, avoid being ideological, opportunity cost and incentives are superpowers, avoid precision in naturally complex social domains and systems, use checklist routines, if you would persuade, appeal to interest and not to reason, bad accounting is a slippery slope, and it's not greed that drives the world but envy.

The Wise Man's Fear (4/5): A gripping sequel to The Name of the Wind. Kvothe is a year older and seemingly 10x more powerful. After a long introduction where he stays at the University trying to overcome daily academic, social, and financial challenges, he gets an opportunity to embark on adventures beyond the normal realm. These adventures test his morals and ethics, and challenge his ideas of identity and reputation. It's easy to get drawn in by Kvothe’s quest for knowledge. Unfortunately, the story line lingers in some of the liminal spaces of Kvothe's journey. He has a sluggish journey to find a group of bandits, which eventually leads to his long and drawn-out interactions with Felurian in the Fae. However, in between these boring parts there is an epic battle with the bandits that was personally my favorite part of the book. There are so many loose ends to tie up in the 3rd book: why does Kvothe own and run an inn? What happened to all his friends from university? Why are there demons running around that need killing? Why has he lost most of his powers? What about the Chandrian?