August 2025 Assorted Links

GPT-5, Genie 3, Stablecoins, UBI, Protein, Power Law, Tolkien, Taste–Skill, Whales

Artificial Intelligence

GPT5

After Sam Altman tweeted a picture of the Death Star ahead of the GPT5 release, anticipation of a giant leap forward in AI progress reached a fever pitch. The mood quickly turned sour after a somewhat botched release. One of the key launch failures was a bug that caused OpenAI's new real-time router, which decides whether a question requires reasoning, to malfunction. On top of that, many people felt abandoned by the deprecation of 4o. It turns out a portion of the user base had developed deep emotional relationships with the model and felt OpenAI stripped them of a partner. Much of the actual progress narrative was buried beneath these negative headlines.

Ethan Mollick had a more optimistic initial take on GPT5: it just does stuff. He found the model grasped his initial requests better than previous models, which required more careful guidance. He pointed out that another major achievement of this model was bringing reasoning capabilities to everyday users who otherwise would not have paid a monthly subscription for such features. Now that step-by-step reasoning is an out-of-the-box feature, the full arsenal of capabilities is available to the world. According to him, we've officially entered the era of mass intelligence.

GPT5 also surprised Ezra Klein, whose initial impressions ran counter to the mainstream narrative that the release was a flop. He found that GPT5 was the first AI that actually felt like a true assistant. What he finds equally surprising is the speed at which AI models have percolated throughout our daily lives. In less than 5 years, these models have woven themselves into our lives as if they'd always been there. Over a third of Americans now use AI every single day. It's amazing how quickly science fiction becomes normal. What does this adoption rate imply about our future dependence on these tools? It's hard to overstate the significance, but he tries here:

As the now-cliché line goes, this is the worst A.I. will ever be, and this is the fewest number of users it will have. The dependence of humans on artificial intelligence will only grow, with unknowable consequences both for human society and for individual human beings. What will constant access to these systems mean for the personalities of the first generation to use them starting in childhood? We truly have no idea. My children are in that generation, and the experiment we are about to run on them scares me.

Maybe the answer is to view AI as just an ordinary technology, one that's similar to other daily drivers we rely on (the internet, e-commerce, etc.).

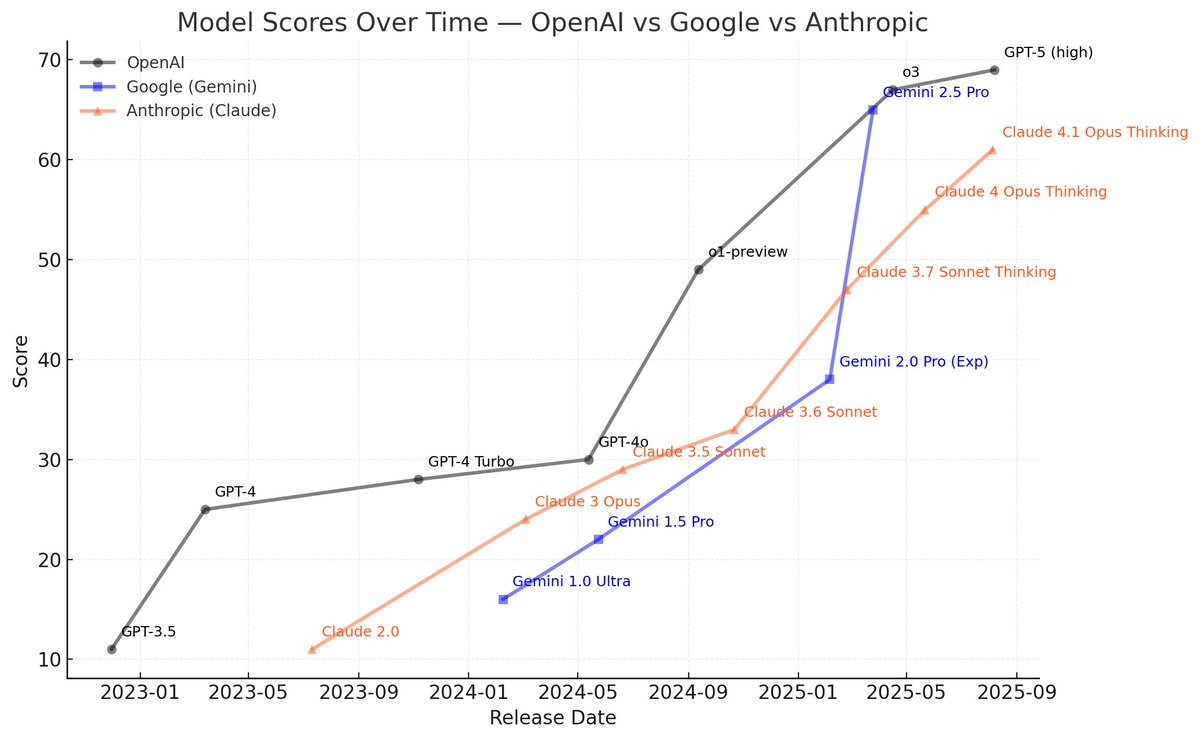

The anticlimactic release of GPT5 made people start wondering: what happens if AI doesn't get much better than this? There's now a perception that cracks are forming in the once-worshipped scaling laws that guided the huge performance leaps from GPT2 through GPT4. Once diminishing returns to scaling appeared, companies shifted focus to new ways of improving performance. The most notable new paradigm has been post-training reinforcement learning (including step-by-step reasoning). These techniques deliver targeted improvements, but not the giant leaps we witnessed in earlier models, which benefited from the front-loaded payoff curve of scaling compute.

This isn't to say that we should stop scaling, or that these models won't have large societal impacts, only that we can view predictions of a runaway intelligence explosion (most vividly described in AI 2027) with more skepticism.

Back in January, the release of DeepSeek R1 created an overblown AI doomer panic, as people were caught off guard that an open-source Chinese model could rival the best US closed-source models. This was dubbed the "DeepSeek moment." Zvi Mowshowitz believes the lackluster GPT5 release has created the opposite reaction, a reverse DeepSeek moment: a misguided complacency that new models will only be marginally better than their predecessors, that we are reaching the top of the S-curve, that we don't have to worry about GPU export controls on China, and that AGI is now in the far distant future. In his view, AI progress is still rapidly accelerating.

General

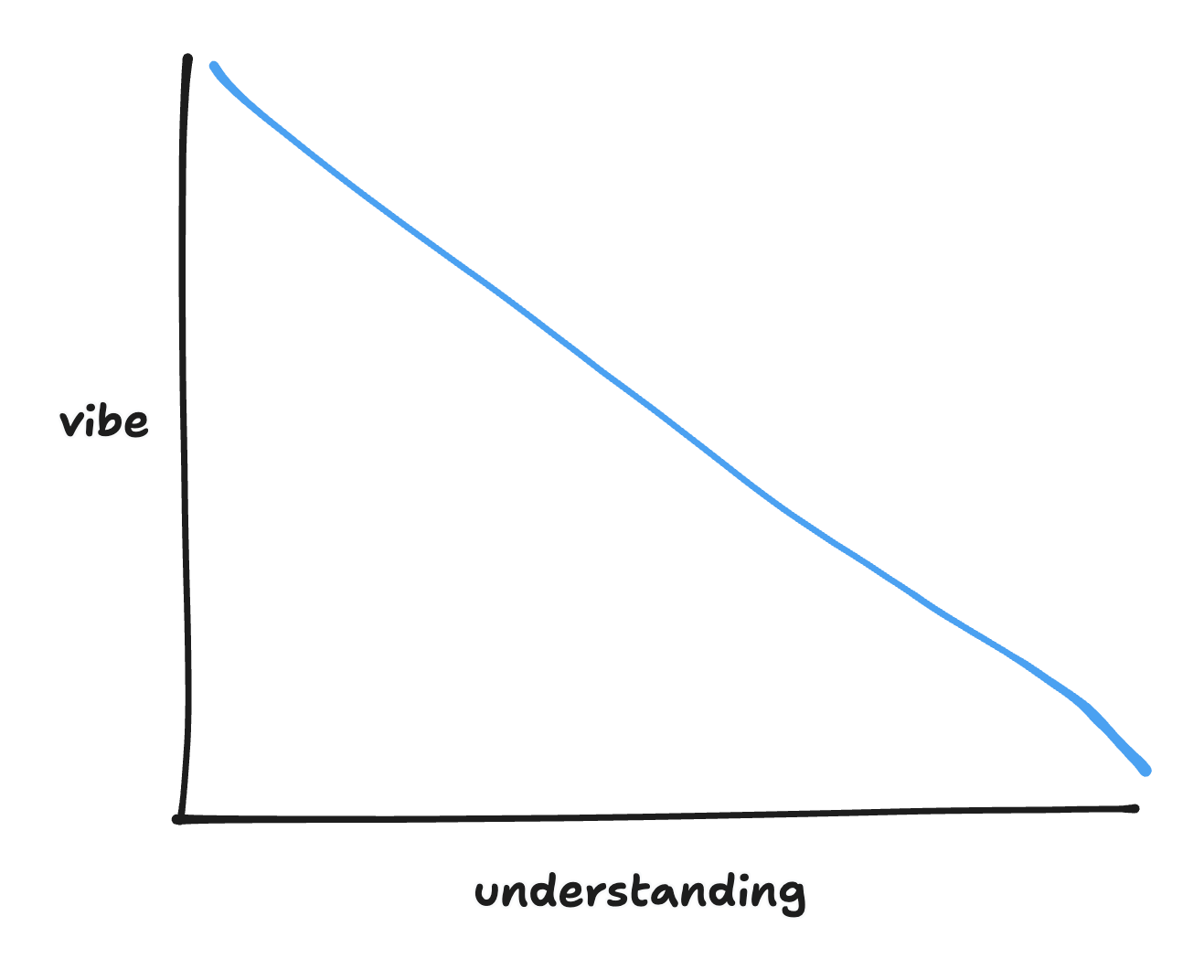

LLMs can't build software effectively because they lack the ability to form mental models the way software engineers do. Similarly, if you are vibe coding something, you probably don't fully understand what is going on. That's completely fine for prototyping, where accumulating tech debt doesn't matter, but vibe coding should be used carefully when building important applications.

As intelligence becomes a commodity, being more knowledgeable than your coworker will no longer be a differentiator for success. Instead, what you should strive for is wisdom and integrity. Wisdom comes down to three core skills: emotional clarity, discernment, and connection. Of those three, discernment might be the most critical. The marginal cost of creating new outputs with AI will be practically zero, so the savvy employee will be the one who can discern what should (or shouldn't) be prioritized.

There's a temptation to automate more and more workflows with AI. Normally there's nothing wrong with that; however, it's important to weigh the downside risk of automations that don't go according to plan. This is especially true in areas where failures are costly, like government aid programs. It's easy to forget that AI is still a relatively novel technology, one that will have growing pains over the coming years. Until we can safely rely on AI to make all our decisions and run all our processes (and that's a big assumption), a good rule of thumb is to keep humans in the loop to guard against automation failures.

There are two broad methods of reasoning: deductive and inductive. Deductive reasoning starts from known axioms and builds on them to reach a new idea. Inductive reasoning relies on specific observations to reach a general conclusion. Einstein, Hume, and Popper were outspoken proponents of deductive reasoning and found inductive reasoning inherently flawed. AIs, which generalize from patterns in their training data, lean heavily on induction: they are currently only as smart as their training data and the tools they can access. As we increasingly offload workloads to them, we need to be cautious about the limits of their inductive reasoning.

Google

August was a landmark month for Google's AI launches. The top three were Gemini Storybooks, Nano Banana, and Genie 3.

Gemini Storybooks takes a user prompt and turns it into a 10-page animated book. Although most likely meant for kids, it’s still incredibly entertaining for adults.

Nano Banana (Gemini 2.5 Flash Image) became the de facto AI image editing model, and a deluge of viral Nano Banana content flooded X after the release.

But by far the most impressive release was Genie 3, Google DeepMind's new world model. It builds fully interactive artificial worlds from a user's text prompt. It's so realistic that it was the first time I've actually considered that we might be living in a simulation.

Crypto

US Treasury Secretary Scott Bessent wrote an op-ed detailing the roadmap to making America a crypto superpower. With the passage of the GENIUS Act in July, there's finally the regulatory clarity around stablecoins that businesses had been yearning for. The act legitimizes stablecoins in the eyes of the government, and it's hard to overstate the significance of that. Bessent's hypothesis is that demand for stablecoins will drive demand for the Treasury bills that back them, which in turn will help reduce the US debt.

The rise and redemption of stablecoins

The US Administration… goal is twofold: to protect the US dollar’s global dominance by expanding its use on digital platforms worldwide; and to reduce borrowing costs by increasing demand for US Treasuries through stablecoin reserve holdings.

The influential economist Kenneth Rogoff is an advocate of crypto payment technologies and stablecoins, but worries about their unintended consequences (tax evasion) and second-order effects on developing countries (illicit and untraceable use). Europe should probably be the most worried of all. Once dollar-backed stablecoins take over, how likely is it that Europe will be able to provide a better alternative? Unlikely.

On John Collison's podcast, Brian Armstrong shares his thoughts on the GENIUS Act, stablecoin adoption, and how Coinbase's business strategy has adapted over time, and offers a pretty shocking Bitcoin price prediction of $1M per coin by 2030.

Business

Gumroad founder Sahil Lavingia reflects on his "failure" to build a billion-dollar company. He found solace in the fact that, although he never built the big company he always dreamed of, Gumroad became essential to the livelihoods of creators.

I stumbled upon Alexandr Wang's old blog. His philosophy on interviewing is to determine whether someone really gives a shit. His prescription for being an effective leader is to do too much (which reminds me of Frank Slootman's Amp It Up credo).

Econ

Giving people money is less helpful than you'd think. Multiple large, high-quality randomized studies have found that guaranteed income transfers do not appear to produce sustained improvements in mental health, stress levels, physical health, child development outcomes, or employment. Participants typically work a little less, but this doesn't translate into either lower stress levels or higher overall reported life satisfaction. There are narrow, targeted use cases where giving money away does make a difference, such as for domestic violence victims or pregnant women. However, it's not going to deliver significant changes for chronic poverty. That requires building and improving institutions that provide education, health care, and housing.

Scott Sumner wrote his last EconLog post. His lesson: integrity is more important than anything else.

America's "Number Go Up" rule implies that the only metric that matters is the value of the S&P 500: as long as the stock market keeps rising, we are doing something right. Obviously this is a hasty overgeneralization that ignores markers of social progress not captured in corporate earnings, but you could do worse for an overall country-level metric. However, the author believes there's something more sinister lurking behind this rule. In his view, corporations shouldn't charge too much or too little; like Goldilocks, they should charge just the right amount. If not, their excess returns are "extractive." He believes the "Number Go Up" rule drives corporations and society toward evil. In my opinion, this is a fundamental misunderstanding of capitalism. As a simple rebuttal, if an incumbent business starts to charge too much, it's reasonable to expect a competitor to come in and compete that "excess" away.

Parenting

Michelle Cyca on the benefits of practicing "obstacle parenting," which means making your child's life slightly more uncomfortable in situations where the discomfort will ultimately be constructive. It implies that you should resist the urge to intervene when your child is trending toward a meltdown, and definitely not use technology as a soothing mechanism. As a general rule, you should avoid giving your kids anything that is inherently addictive. All of this I agree with. But there's a central argument Cyca makes that I think is flat-out wrong: that generative AI in and of itself is bad for kids. It obviously depends on how it is used. Just because an LLM can finish rudimentary homework in seconds doesn't excuse teachers for assigning stupid assignments in the first place.

Science and Technology

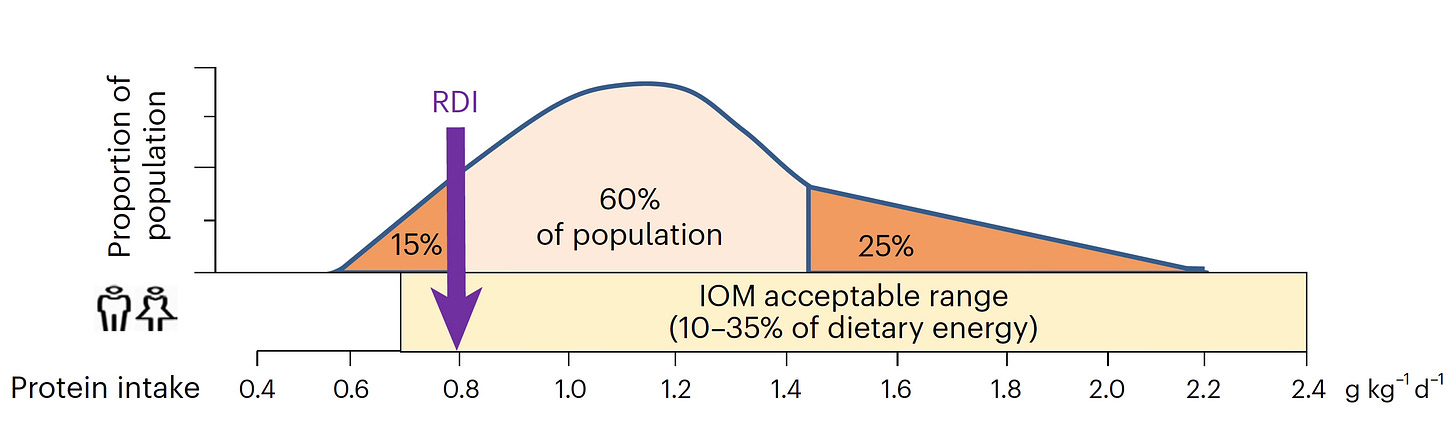

Everybody and their mom is consuming more protein than ever before, but is this actually a healthy trend? Leading health "influencers" like Andrew Huberman and Peter Attia recommend consuming at least 2.2 g/kg/day, far more than the recommended daily intake (RDI) of 0.8 g/kg/day. Data shows that 85% of the US population already consumes more than the RDI, and nearly 25% consume twice the RDI. This essay goes even further, claiming there's no scientific evidence that high protein intake (up to a point) is good for you, or that it has a significant effect on building muscle, even if you're strength training. The paradox is that serious weightlifters religiously consume protein and show significant results. So who do you believe: the experts or the athletes?
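To make the gap between the two targets concrete, here is a quick back-of-the-envelope calculation. The 70 kg body weight is a hypothetical example, not a figure from the essay; the two per-kilogram targets are the ones quoted above.

```python
# Protein targets quoted above, in grams per kilogram of body weight per day.
RDI_G_PER_KG = 0.8          # recommended daily intake (RDI)
INFLUENCER_G_PER_KG = 2.2   # target attributed to Huberman and Attia


def daily_protein_grams(body_weight_kg: float, grams_per_kg: float) -> float:
    """Daily protein in grams for a given body weight and per-kg target."""
    return body_weight_kg * grams_per_kg


if __name__ == "__main__":
    weight_kg = 70.0  # hypothetical example adult, not from the source
    rdi = daily_protein_grams(weight_kg, RDI_G_PER_KG)
    high = daily_protein_grams(weight_kg, INFLUENCER_G_PER_KG)
    print(f"RDI: {rdi:.0f} g/day")
    print(f"Influencer target: {high:.0f} g/day")
    print(f"Ratio: {high / rdi:.2f}x the RDI")
```

For that hypothetical 70 kg adult, the influencer target works out to 154 g/day versus 56 g/day at the RDI, about 2.75 times as much.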

Philosophy

Seriously motivated people, who find meaning in what they do, make up a relatively small share of the working population. They don't work hard for social validation. Compared with the average "4-hour lifer" (i.e., basically the rest of the working population), they make it look like no one else is even trying. The lesson is that you need to take personal accountability for what you want out of your life and deliberately increase your effort to get there. If you're lucky, maybe you'll find the intersection between personal meaning and work.

Companies pay young professionals high wages for a number of reasons, but not because they believe the average young professional deserves such a high salary. In reality, companies don't want to miss out on the outliers, whose outsized productivity alone makes up for the rest of the pack. This is a classic power law situation.
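As a rough illustration of why outliers can carry the pack, here is a sketch that assumes output follows a Pareto distribution (an assumption for illustration; the source doesn't name a distribution). The Pareto Lorenz curve gives the share of total output produced by the top fraction p of workers as p^(1 - 1/alpha), where the shape parameter alpha must exceed 1.

```python
import math


def top_share(top_fraction: float, alpha: float) -> float:
    """Share of total output produced by the top `top_fraction` of workers,
    assuming output is Pareto-distributed with shape `alpha` > 1.

    Derived from the Pareto Lorenz curve: share = top_fraction ** (1 - 1/alpha).
    """
    if not 0.0 < top_fraction <= 1.0:
        raise ValueError("top_fraction must be in (0, 1]")
    if alpha <= 1.0:
        raise ValueError("alpha must be > 1 for a finite mean")
    return top_fraction ** (1.0 - 1.0 / alpha)


if __name__ == "__main__":
    # alpha = log(5)/log(4), about 1.16, is the shape that yields the 80/20 rule.
    eighty_twenty_alpha = math.log(5) / math.log(4)
    for alpha in (eighty_twenty_alpha, 1.5, 3.0):
        print(f"alpha={alpha:.2f}: top 10% -> {top_share(0.10, alpha):.0%}, "
              f"top 20% -> {top_share(0.20, alpha):.0%} of output")
```

The smaller alpha is, the heavier the tail and the more of the total the top performers account for; at alpha of about 1.16 the top 20% produce 80% of the output.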

A recent study found that 88% of students fake wokeness to fit in.

Ever felt immediately inspired by a new project idea that came to you in the shower, only to let the vision slip away as soon as you realized the effort required to make it a reality? It turns out there's a cognitive science term for this, the taste-skill discrepancy, which basically says: "Your taste (your ability to recognize quality) develops faster than your skill (your ability to produce it)." Even if you start a new endeavor, the true test is getting past "the quitting point." The key to overcoming this ambition impediment is to lower your expectations. Don't let perfect be the enemy of good. Use past failures constructively; they'll reduce the structural tension between imagination and reality.

On Writing

Why Tolkien's literature is revered by both the left and the right. The short answer: it's simply the best.

Miscellaneous

A profile of one of the oldest living owners of a Frank Lloyd Wright-designed home

Spending time and learning life lessons from the Totteridge Yew, London's oldest tree

Chronicles of daily life in Nagasaki before the atomic bomb was dropped

Scientists are using drones to tag sperm whales for study. This is supporting efforts to record more whale audio in the hopes that we will eventually be able to decode whale songs through AI. How cool is that?

New research is revealing that single cells can keep a record of their experiences for what appear to be adaptive purposes

Top Tweets

The reactions to Genie 3 came pouring in after launch (1, 2, 3, 4)

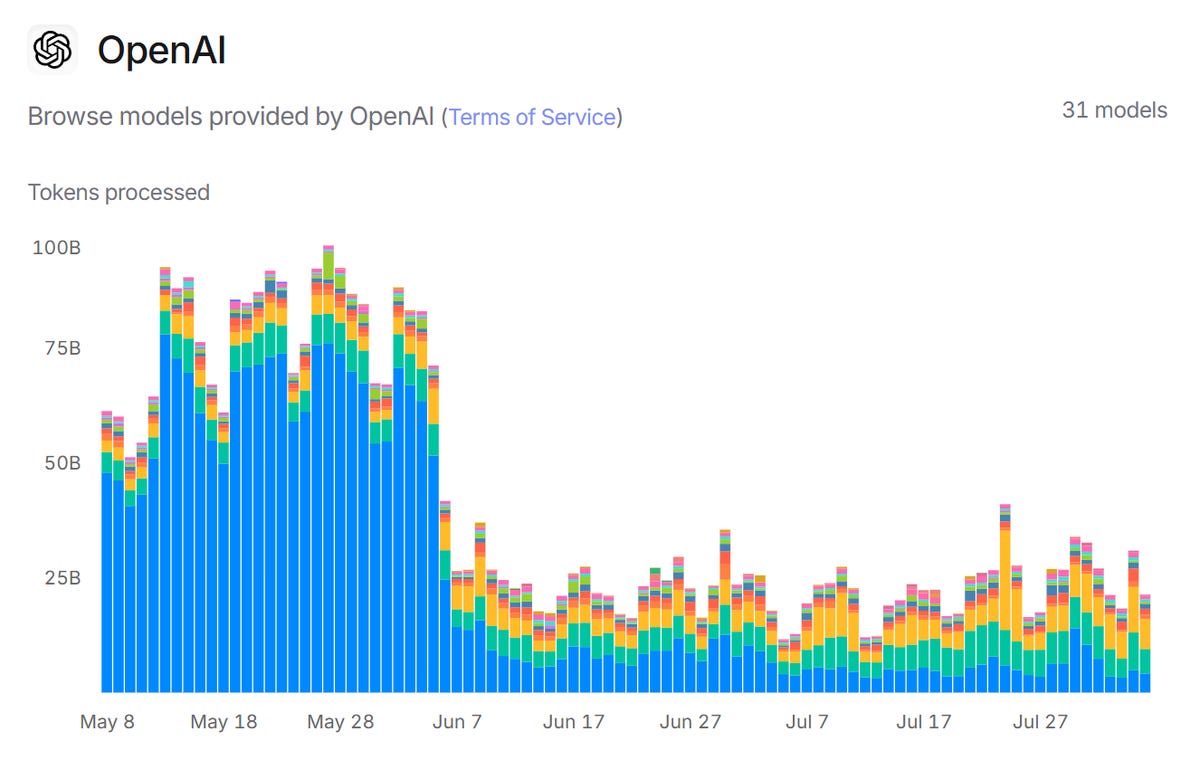

The number of tokens processed by ChatGPT fell off a cliff after the school year ended

People are willing to pay 50% more for Waymo than Lyft, despite longer wait times

Breakdown of the tactics Justin Rose used on the last hole to win the St. Jude championship

If the task length an AI can reliably finish doubles every 7 months, we’re in for a crazy future

The hype around creatine is growing as fast as the number of reported benefits
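The 7-month doubling claim among the tweets above compounds faster than intuition suggests. Here is a minimal sketch, assuming the doubling rate simply holds constant; the 1-hour starting task length is a hypothetical placeholder, not a figure from the tweet.

```python
def task_length_multiplier(months: float, doubling_period_months: float = 7.0) -> float:
    """Growth factor in the task length an AI can reliably finish after `months`,
    assuming the length doubles every `doubling_period_months`."""
    return 2.0 ** (months / doubling_period_months)


if __name__ == "__main__":
    current_task_hours = 1.0  # hypothetical starting point, not from the source
    for years in (1, 2, 5):
        factor = task_length_multiplier(12 * years)
        print(f"After {years} year(s): x{factor:,.1f} "
              f"-> about {current_task_hours * factor:,.0f} hours of work")
```

Under that assumption, five years of doubling compounds to roughly a 380-fold increase in task length, which is what makes the projection feel so dramatic.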